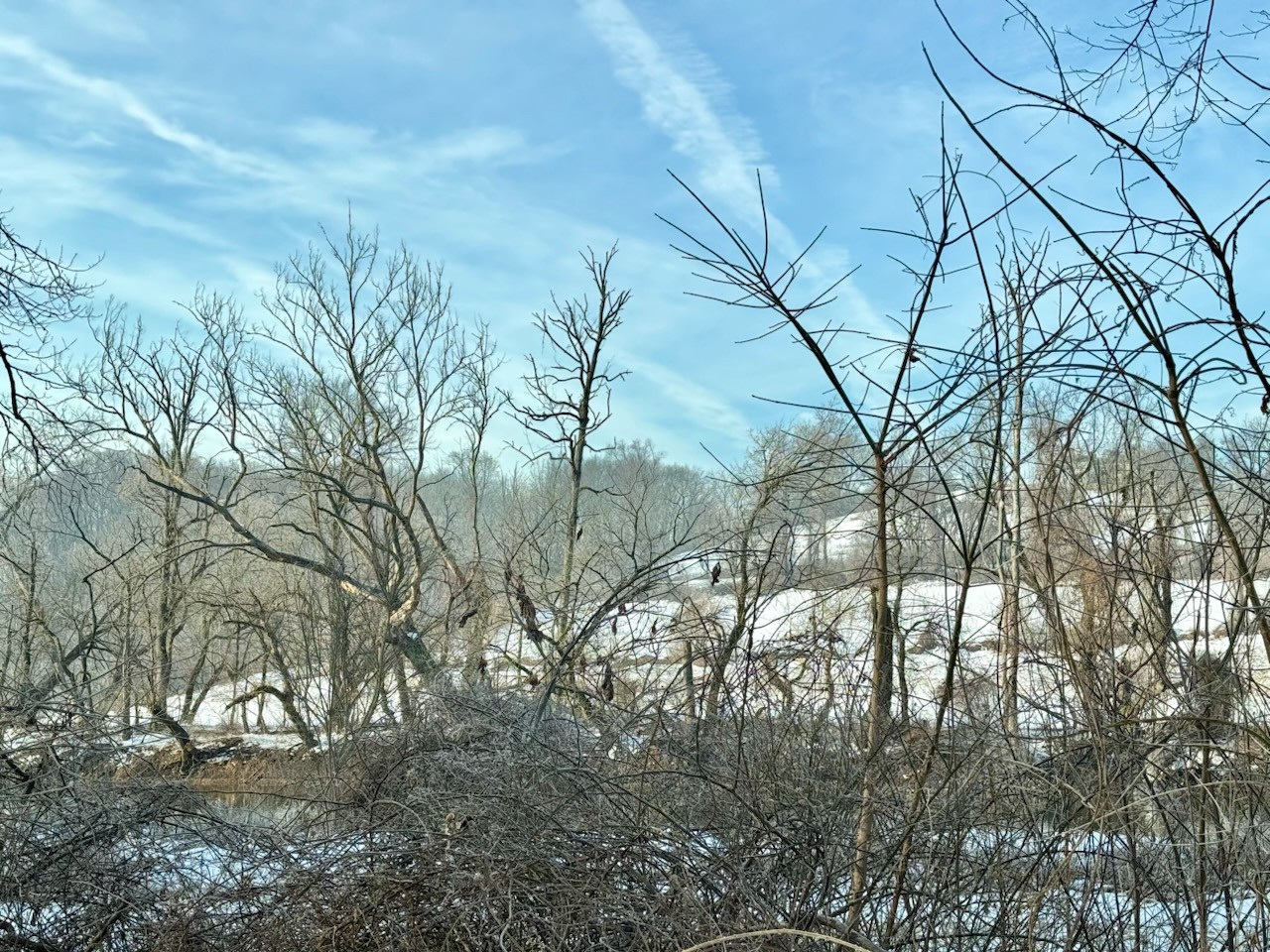

Mossy rocks in the snow.

Nomad

@wally

Blue skies coming through.

Classical music, Sleepytime tea, and Crafting Interpreters.

Ippodo matcha has gotten a bit expensive, so I've been looking for alternatives. Turns out Trader Joe's matcha ain't too bad!

I love October.