Music on physical media has been the win for decades.

Nomad

@mrfresh

Cousins...

Shuttlecraft

Morning.

In the spirit of Kyle's latest watch posts, Pebble is back, via Eric Migicovsky and the new Pebble apps on iOS and Android.

https://ericmigi.com/blog/how-to-build-a-smartwatch-software-setting-expectations-and-roadmap

2014 MacBook Air - still running strong as the daily driver.

#guitaristsofnomad

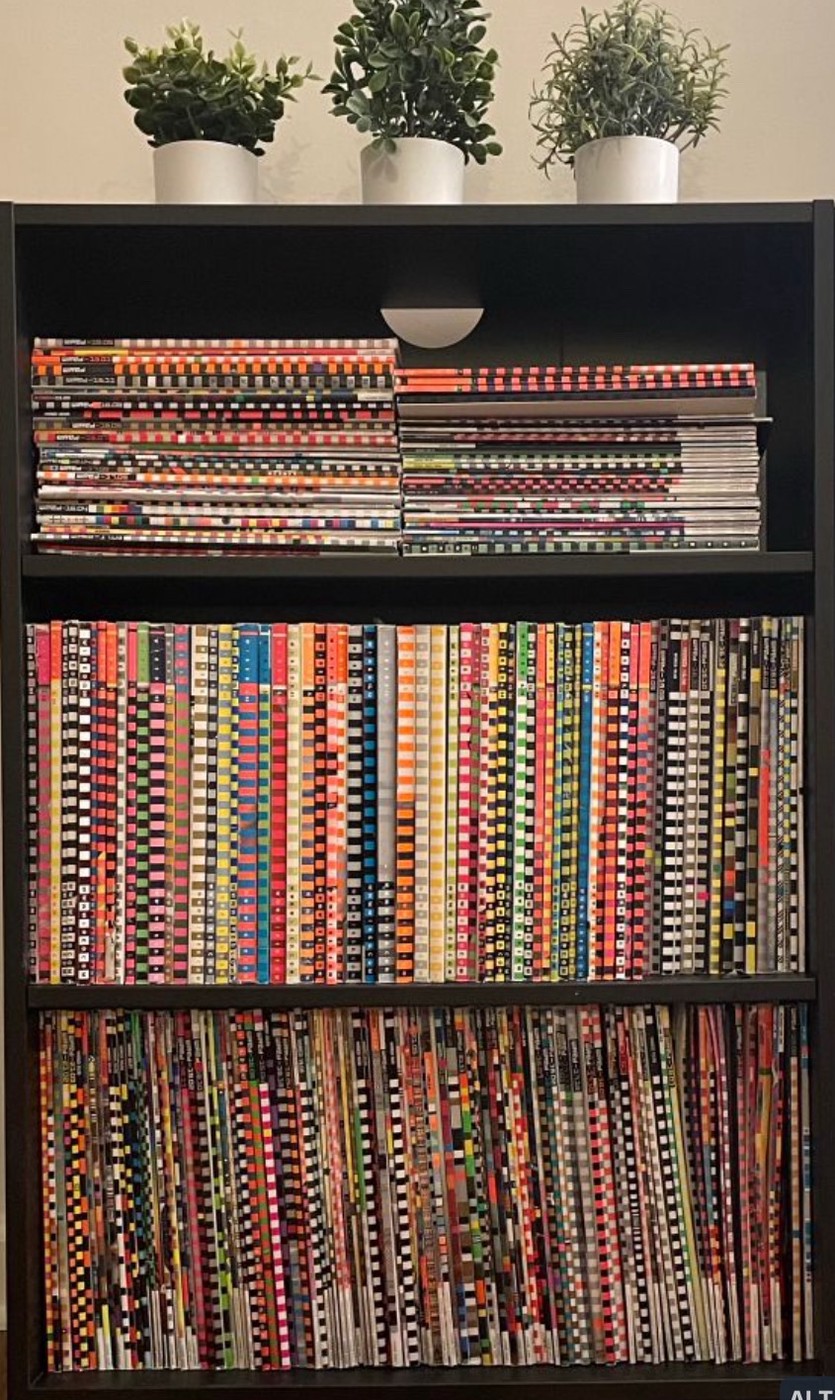

My Wired Magazine collection (the 2nd, 3rd, and 4th issues from 1993 through the present, with a great deal missing along the way).